Learning k8s and Docker by deploying a Go server

I have made several attempts at learning Kubernetes (k8s) and Docker, but all the documentation and tutorials I have read haven't really stuck with me. The YAML specifications often feel like magical incantations and I quickly forget what they really mean.

To learn the underlying concepts once and for all, I decided to deploy a minimal Go server on a local Kubernetes cluster. In doing so, I wanted to understand the significance of every single command that I executed.

In this post, I'll walk you through this process. I'll only include code and config that really matter, and explain exactly why we need each piece. I strongly encourage you to follow along: seeing your first pod running is a great feeling, and it doesn't take that many lines of code. You can find the codebase here.

Here's what you'll need installed:

- go: to develop our server

- docker: to build images and run containers

- kind: to create a local k8s cluster

- kubectl: to interact with our k8s cluster

Let's get started.

Step 1. A "hello" Go server

Here's an implementation of a server in Go that simply responds with "hello". We include the hostname in the response, which will be useful later on.

    package main

    import (
        "fmt"
        "net/http"
        "os"
    )

    // hello responds with a greeting that includes the machine's hostname.
    func hello(w http.ResponseWriter, req *http.Request) {
        hostname, _ := os.Hostname()
        fmt.Fprintf(w, "Hello from %s\n", hostname)
    }

    func main() {
        http.HandleFunc("/", hello)
        fmt.Println("Server is listening on :8080")
        // ListenAndServe blocks until it fails, so report the error and exit.
        if err := http.ListenAndServe(":8080", nil); err != nil {
            fmt.Fprintln(os.Stderr, err)
            os.Exit(1)
        }
    }

Let's set up the project:

    > mkdir go-server && cd go-server && touch main.go
    # copy contents to main.go
    > go mod init go-server && go mod tidy

Now compile and execute the server:

    > go build -o server .
    > ./server
    Server is listening on :8080

In another terminal, run:

    > curl localhost:8080
    Hello from Kartiks-MacBook-Air.local

Step 2. Containerizing our server

Our server works locally, so let's take it to production! To handle production load and be fault tolerant, we will run multiple instances of our server on different machines. This raises a question: "How do we ensure that our server runs on a different machine exactly like it does locally?"

Containers simplify this process by packaging your application and everything it needs to run as an isolated process. Docker is a popular platform for building and running containers. It uses a Dockerfile to specify the instructions for packaging your application as an image, and it executes this image as a container at runtime.

Our Dockerfile should describe the steps to compile our server and execute it. Since Go can generate statically-linked binaries, we can reduce the size of our image by using the Go toolchain only for compilation and leaving it out of the final image. To do this, we will use a multi-stage build.

Add the following to a Dockerfile in the same folder. I have documented what each line is doing so you understand exactly why we need it.

    # Stage 1: Build the Go application
    ## The Golang image provides the Go toolchain for compilation. We name this stage "builder".
    FROM golang:latest AS builder
    ## Setting the working directory runs subsequent COPY and RUN commands from /app.
    ## By convention, we put application content into the /app directory within the image.
    WORKDIR /app
    ## Copy server code from our machine to the image.
    COPY go.mod main.go ./
    ## Compile the server. Check note below.
    RUN CGO_ENABLED=0 GOOS=linux go build -o server main.go

    # Stage 2: Execute the binary
    ## Scratch is an empty base image with nothing installed.
    FROM scratch
    ## Copy the compiled binary from the builder stage.
    COPY --from=builder /app/server /server
    ## Document the port the server listens on. This does not add any functionality.
    EXPOSE 8080
    ## Setting our compiled server as the entrypoint will launch it when we run this image.
    ENTRYPOINT ["/server"]

Note: We compile our server for Linux because Docker containers run on a Linux kernel (on macOS and Windows, Docker runs them inside a Linux virtual machine). We disable CGO to generate a statically-linked binary that doesn't rely on external C libraries, which matters because the scratch image contains none.

Let's build our image with the name go-server. In the same folder, run:

    > docker build -t go-server .

To verify whether our image was created successfully, run:

    > docker images go-server
    REPOSITORY   TAG       IMAGE ID       CREATED         SIZE
    go-server    latest    9d0cccc4966b   2 minutes ago   7.75MB

Let's execute our image in a container. In one terminal, run:

    > docker run -p 8080:8080 go-server
    Server is listening on :8080

This runs our Docker image and maps localhost:8080 to port 8080 in the Docker container, where our server is listening for incoming connections.

Now, in another terminal, run:

    > curl localhost:8080
    Hello from 6967d234abee

There you go! Our server is now running inside a container, and the hostname we see in the response is the container ID.
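The handler can also be exercised without Docker or an open port. The following is a minimal sketch using Go's net/http/httptest package. callHello is a hypothetical helper, and hello is copied from main.go. Whatever os.Hostname returns in the current environment (your machine name, or a container ID inside Docker) shows up in the response.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"os"
)

// hello is the same handler as in main.go.
func hello(w http.ResponseWriter, req *http.Request) {
	hostname, _ := os.Hostname()
	fmt.Fprintf(w, "Hello from %s\n", hostname)
}

// callHello invokes the handler in-process with a fake GET request
// and returns the response body it wrote (hypothetical helper).
func callHello() string {
	rec := httptest.NewRecorder()
	req := httptest.NewRequest(http.MethodGet, "/", nil)
	hello(rec, req)
	return rec.Body.String()
}

func main() {
	// Prints "Hello from " followed by the hostname of wherever this runs.
	fmt.Print(callHello())
}
```

Running this on your machine and inside a container should print different hostnames, which is the same difference we saw between the two curl responses.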

Step 3. Kubernetes

Containers make it incredibly easy to scale your application. Since our image contains our server code along with everything that it needs to run, we can easily spin up multiple Docker containers and stack a load balancer on top.

Unfortunately, containers are meant to be ephemeral and can stop, crash or be replaced at any time. If you require 3 replicas of your application running consistently, you will need to monitor container health and trigger restarts.

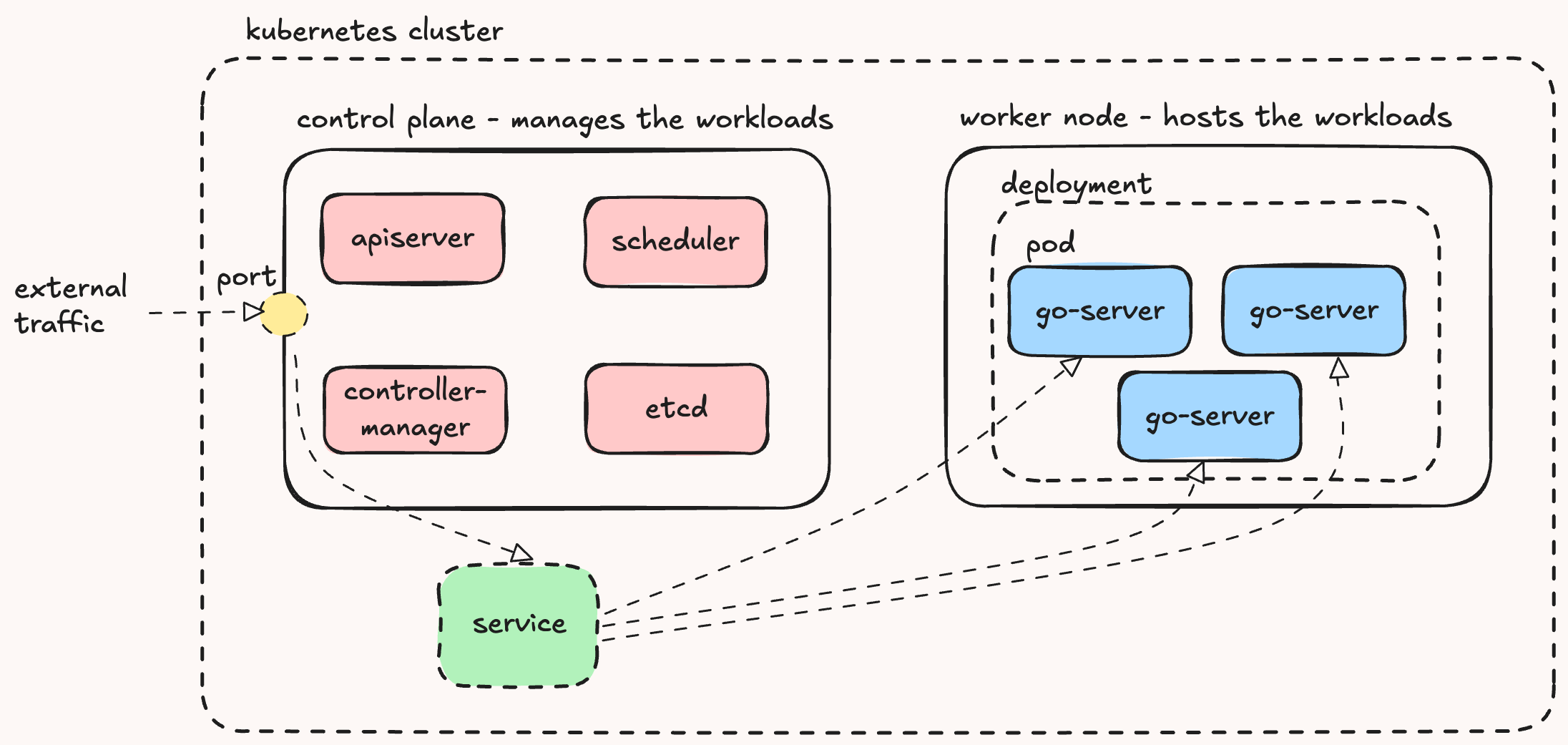

Thankfully, Kubernetes handles this for us. It orchestrates containerized applications and takes care of scaling and failover. Here's a quick rundown of the core components we'll be working with to deploy our application:

- Pods are the basic units of deployment. Kubernetes runs containers inside Pods and monitors them for failures.

- Deployments declare the desired count of Pods for your application. Kubernetes will ensure that this count is maintained.

- Services give a set of Pods a stable network endpoint and can expose them to the outside world, allowing external traffic to reach the running Pods.

- Clusters are the backbone of Kubernetes. They run all of the above. Ours will have two nodes:
  - A control-plane node that manages and schedules workloads.
  - A worker node that actually runs the Pods.

Visually, these components look like:

Creating a deployment

To deploy our application to k8s, we will create a deployment. Our deployment will manage 3 replicas of our server, where each replica runs our Docker image in a pod.

Kubernetes is a declarative system, which means we tell it what we want and it will ensure that it is achieved. Our deployment will declare:

- Pods managed by the deployment

- The desired pod replica count

- The pod itself, and the image it runs

In deployment.yaml, add:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: go-k8s-server
    spec:
      replicas: 3 # Number of Pod replicas to maintain
      selector:
        matchLabels:
          app: go-k8s-server # Manages Pods with this label
      # Define our Pods
      template:
        metadata:
          labels:
            app: go-k8s-server # Pod label (must match the selector above)
        spec:
          containers:
            - name: go-k8s-server
              image: go-server:latest # Image to run in the container.
              imagePullPolicy: IfNotPresent # Use local image if available.
              ports:
                - containerPort: 8080 # Document the port the container listens on.

Creating a service

To expose our application outside our cluster, we will create a service. We will use a NodePort service, which will expose our application on a static port on every node in the cluster.

Our service will declare:

- The pods to distribute requests to

- The port to expose on the node to the outside world

- The port to listen on inside the cluster

- The port to forward traffic to on the pod

In service.yaml, add:

    apiVersion: v1
    kind: Service
    metadata:
      name: go-k8s-server
    spec:
      type: NodePort
      selector:
        app: go-k8s-server # Selects Pods with this label
      ports:
        - name: http
          port: 80 # Listen on port 80 inside the cluster
          targetPort: 8080 # Forward traffic to port 8080 on the pods.
          nodePort: 30000 # Declare the port opened on the node.

Kubernetes will expose our service on a static port (30000) on every node in the cluster. Internally, the service listens on port 80 and forwards traffic to port 8080 on matching pods, where our Go server is running.
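The selector is what ties the service to its pods. As a rough mental model, a pod is selected when its labels contain every key/value pair in the selector. The sketch below illustrates that rule in Go. It is a simplification, not Kubernetes' actual implementation, and the pod type, the matches function and the example pod names are all made up for illustration.

```go
package main

import "fmt"

// pod is a stripped-down stand-in for a Kubernetes Pod: a name plus labels.
type pod struct {
	name   string
	labels map[string]string
}

// matches reports whether a pod's labels satisfy a selector: every
// key/value pair in the selector must be present on the pod.
// Extra labels on the pod are ignored.
func matches(selector, labels map[string]string) bool {
	for key, want := range selector {
		if got, ok := labels[key]; !ok || got != want {
			return false
		}
	}
	return true
}

func main() {
	// Same selector as in service.yaml.
	selector := map[string]string{"app": "go-k8s-server"}

	pods := []pod{
		{"go-k8s-server-a", map[string]string{"app": "go-k8s-server"}},
		{"go-k8s-server-b", map[string]string{"app": "go-k8s-server", "tier": "web"}},
		{"other-app", map[string]string{"app": "other"}},
		{"unlabeled", nil},
	}
	for _, p := range pods {
		fmt.Printf("%s selected: %v\n", p.name, matches(selector, p.labels))
	}
}
```

Only the first two pods are selected. This is also why the pod label in deployment.yaml must match the selector: a pod without the app: go-k8s-server label would never receive traffic from the service.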

Creating a cluster

We need a k8s cluster to deploy our application on. An easy way to create a cluster locally is via kind, which spins up a Kubernetes cluster using Docker containers as nodes.

Let's configure our cluster to have a control-plane node and a worker node. In kind-config.yaml add:

    apiVersion: kind.x-k8s.io/v1alpha4
    kind: Cluster
    # Specifies the nodes in our cluster.
    nodes:
      - role: control-plane
        # Expose port 30000 from the kind container. See note below.
        extraPortMappings:
          - containerPort: 30000
            hostPort: 8080
            listenAddress: "0.0.0.0"
            protocol: tcp
      - role: worker

In our kind-config.yaml, we map the control-plane node's port 30000 to port 8080 on our machine. This allows us to curl localhost:8080, which sends traffic into the cluster via the NodePort.

Let's create a cluster with this configuration.

    > kind create cluster --config kind-config.yaml

Verify whether your cluster was successfully created.

    > kind get clusters
    kind
    > kind get nodes
    kind-control-plane
    kind-worker

Running our application

We have everything in place. First, let's make our Docker image available to the nodes in our cluster. Run:

    > kind load docker-image go-server

Now, let's deploy our application. Run:

    > kubectl apply -f deployment.yaml
    deployment.apps/go-k8s-server created

This will create three replicas of our Go server pod, each running the containerized server on port 8080.

    > kubectl get pods
    NAME                             READY   STATUS    RESTARTS   AGE
    go-k8s-server-56dcff97b8-7tbss   1/1     Running   0          13m
    go-k8s-server-56dcff97b8-82q87   1/1     Running   0          13m
    go-k8s-server-56dcff97b8-gd794   1/1     Running   0          13m

Next, apply the service:

    > kubectl apply -f service.yaml
    service/go-k8s-server created

This sets up a NodePort service that will distribute incoming requests across the available pods.

    > kubectl get service
    NAME            TYPE       CLUSTER-IP      EXTERNAL-IP   PORT(S)        AGE
    go-k8s-server   NodePort   10.96.242.102   <none>        80:30000/TCP   14m

Now let's test it out!

    > curl localhost:8080
    Hello from go-k8s-server-56dcff97b8-gd794
    > curl localhost:8080
    Hello from go-k8s-server-56dcff97b8-82q87
    > curl localhost:8080
    Hello from go-k8s-server-56dcff97b8-7tbss

Our service is load-balancing incoming requests across all our pods automatically! Let's delete a pod at random:

    > kubectl delete pod go-k8s-server-56dcff97b8-82q87

Now let's get pods again:

    > kubectl get pods
    NAME                             READY   STATUS    RESTARTS   AGE
    go-k8s-server-56dcff97b8-7tbss   1/1     Running   0          17m
    go-k8s-server-56dcff97b8-gd794   1/1     Running   0          17m
    go-k8s-server-56dcff97b8-jvh87   1/1     Running   0          3s

Do you see the most recent pod? In the blink of an eye, Kubernetes spun up a new pod to maintain the desired replica count without us having to do anything.
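What we just saw is the deployment reconciling the actual state with the desired state. Here is a toy sketch of that idea in Go. It is only an illustration under my own assumptions (the reconcile function and the pod names are invented), not how Kubernetes' controllers are actually implemented.

```go
package main

import "fmt"

// reconcile compares the desired replica count with the pods that are
// currently running and returns the pod list after correcting the
// difference. newName is called to name each replacement pod.
func reconcile(desired int, running []string, newName func() string) []string {
	// Too few pods: start replacements until the count is met.
	for len(running) < desired {
		running = append(running, newName())
	}
	// Too many pods: drop the surplus.
	if len(running) > desired {
		running = running[:desired]
	}
	return running
}

func main() {
	next := 0
	newName := func() string {
		next++
		return fmt.Sprintf("pod-%d", next)
	}

	// Initial rollout: nothing is running, three replicas are desired.
	pods := reconcile(3, nil, newName)
	fmt.Println(pods) // [pod-1 pod-2 pod-3]

	// Simulate deleting the second pod.
	pods = append(pods[:1], pods[2:]...)
	fmt.Println(pods) // [pod-1 pod-3]

	// The next reconciliation restores the desired count with a new pod.
	pods = reconcile(3, pods, newName)
	fmt.Println(pods) // [pod-1 pod-3 pod-4]
}
```

Notice that the replacement gets a new name rather than reusing the old one, just like go-k8s-server-56dcff97b8-jvh87 replaced the pod we deleted.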

Conclusion

We started with a simple Go server, containerized it using Docker, deployed it onto a local Kubernetes cluster and exposed it as a fault-tolerant service. Most importantly, we did all this by understanding the purpose behind every component, configuration and command.

There is a lot more we could do with this. We can deploy our application over multiple physical nodes, persist state across pod restarts and deploy multiple applications that talk to each other. Having a solid understanding of the basics will allow you to do this confidently.

I hope you learned as much as I did uncovering every single line of Docker and Kubernetes configuration. If I missed something, please let me know.